Testing your voice experience is an essential step in conversation design. It lets you evaluate your voice design from different angles, pinpoint flaws and inefficiencies, and confirm that it functions as intended. Whether you're running user tests with customers or reviews with stakeholders, frequent testing gives you a clearer sense of your design's direction and scalability and helps ensure the reliability of the application you are building. It saves you time, money, and extensive maintenance after deployment. Above all, it builds confidence in your experience so users keep coming back.

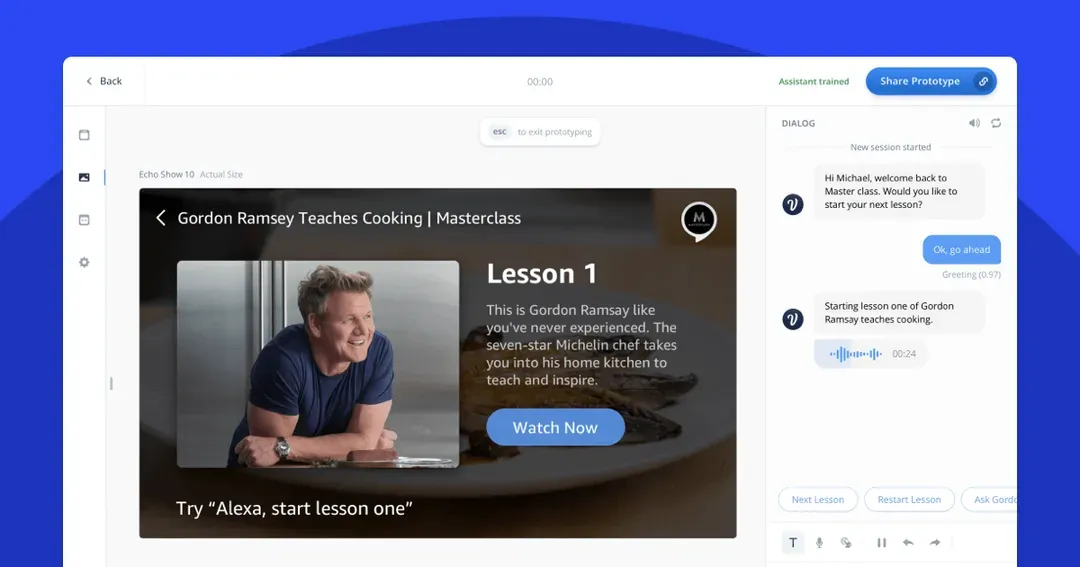

Beyond the value it promises, the success of any voice experience is gauged by its quality, ease of use, and reliability for customers. At Voiceflow, we're determined to build upon our existing prototyping functionality to give you the toolkit you need to run extensive and efficient voice testing. That's why we're excited to introduce manual navigation and multimodal visuals within our test tool.

Voice testing is a process. There may be multiple paths you want to test, different slot values you'd like to try, or different intents you'd like to match. These all lead to the inevitable: restarting your experience from the beginning to try to reach the desired result.

With the ability to start a test from any block, we've made it easier to test the part of the conversation you're focused on at any moment, so you can quickly jump in and test before continuing on with your design work.

We want to empower designers, developers, and creators to prototype faster and more efficiently while getting the user experience right. With new manual controls, you can freely navigate forward and backward to specific parts of your voice design, giving you more granular control over the entire test experience. This also opens the door for future enhancements such as moderated tests with customers.

We want to give you the power to iterate faster, and so we're excited to release a robust prototyping process that will help push your designs from testing to deployment.

At Voiceflow, we want to stand behind a tool that encompasses all aspects of conversation design, including the rising demand for multimodal experiences. This is more apparent now than ever, with the widespread adoption and advancement of APL (Alexa Presentation Language), Amazon's voice-first design language. APL allows you to create rich, interactive displays for Alexa skills so you can tailor your voice experience for all sorts of Alexa-enabled devices.

Voiceflow now makes it easy to prototype display elements in the test tool with the release of multimodal visuals. You can design and test for displays of varying sizes that help deepen the context of your voice experience. With multimodal visuals, get ready to experiment with graphics, images, and videos while building for a wide array of Alexa devices like Amazon Echo Show and Fire TV.

Multimodal visuals are currently available only within Amazon Alexa projects. This functionality will be accessible across all channels in the coming months, so stay tuned for more updates!

You can access these features by navigating to the test button in the top right-hand corner of the Creator Tool.

Want to learn more?

Please visit our comprehensive changelog for more information on this release.