Voiceflow named a 2026 Best Software Award winner by G2

Read now

If you’re more of an audio-visual learner, we suggest tuning into our webinar on this topic (featuring Colin Guilfoyle, VP for Customer Support at Trilogy, who managed to automate over 60% of their customer support in under two months). Stick around for the full breakdown below.

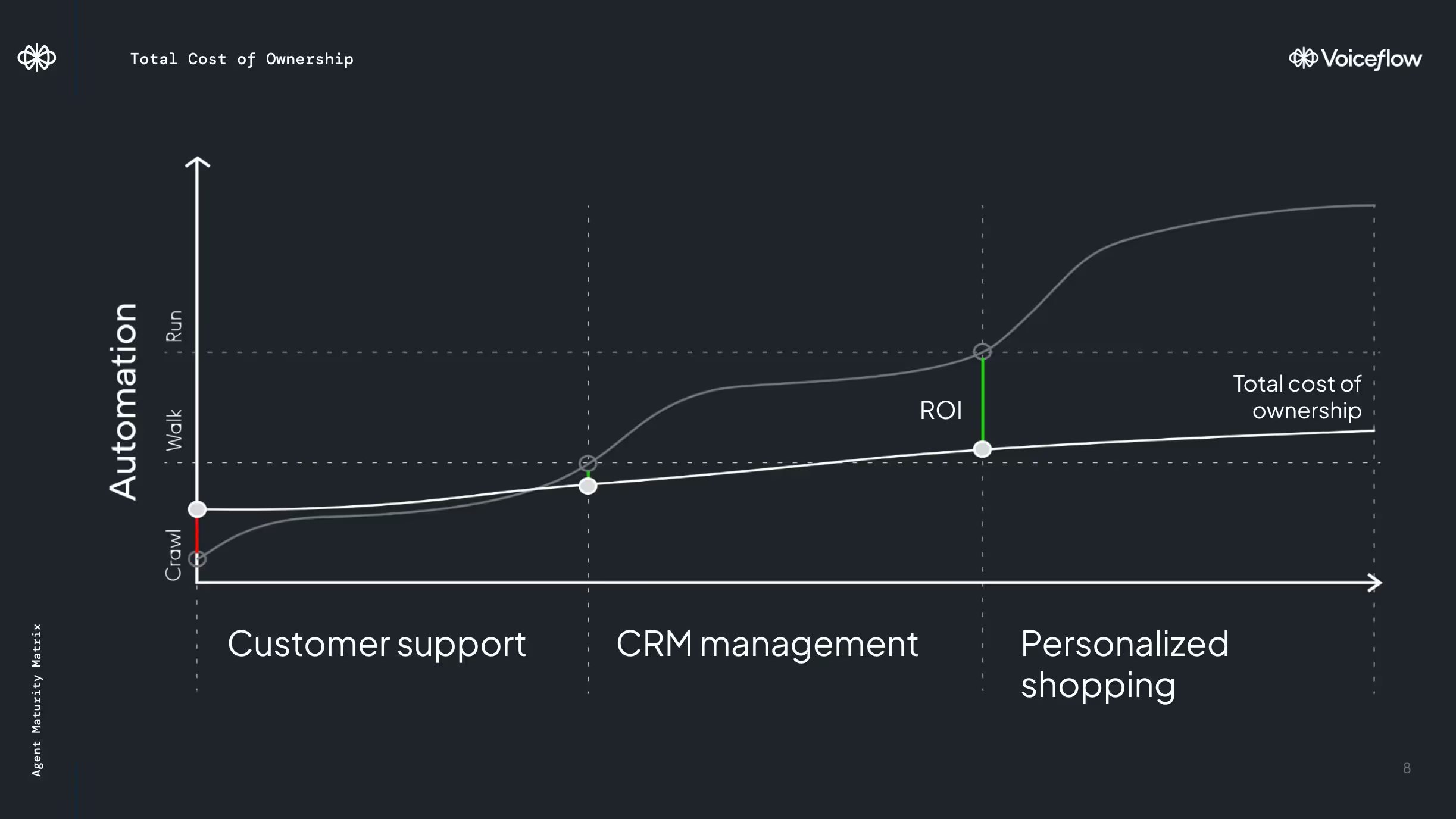

Starting with a simple agent is the surefire way to gain traction, before scaling to numerous agents and use cases. Because when you try to walk before you crawl or run before you walk, you’re going to trip over your own feet.

[Starting a new AI agent? We’ve got you]

But running before you walk isn’t as common an issue for most teams. Most companies struggle to move their first AI proof of concept (POC) out of production purgatory—it’s often called the cold start problem, named for the difficulty in starting old internal combustion engines when the gas is cold. Once the gas is hot, turning the engine on and off is a breeze. Similarly, once teams have launched one AI agent, they find it much faster and easier to expand to several agents or several use cases.

But you don’t have to take my word for it. Colin Guilfoyle, VP for Customer Support at Trilogy, has done it. His team started with one AI build—they call it the Atlas core, very Tony Stark-coded—and have used that build to expand to 90 customer support lines that handle AI support across products.

In order to scale production to this level and keep all their agents working smoothly once they got there, they needed to start with how they organized their team. Because there’s so many product lines that require support, their team is made up of code-focused, senior product specialists. These directly responsible individuals (DRIs) are given a subset of products to analyze each week, including how well the customer support automations and tickets are performing. Then, they replicate what goes right and refine what goes wrong—from refining knowledge base searches, training models, ticket raising, building the right retrieval-augmented generation (RAG), and integrating the right tools to solve specific product issues. They apply their best practices to their Atlas core, which they use as a foundation for building and expanding to new agents and products, and the process continues.

By effectively dividing and replicating their agents, while continually monitoring and improving them, Trilogy is on track to support 65% of their customer inquiries using AI agents. The next phase of their expansion includes replacing human support on L2 troubleshooting and automating customer changes in the system securely.

[Read more about how Trilogy automated 60% of their customer support in 12 weeks.]

While you may not have the budget to expand your team or want to create 90 agents, Trilogy’s approach to iterative improvement and replication is a wise one to consider when weighing costs. When it comes to scaling your agent, whether that’s launching more agents or expanding your agent’s current capabilities, there’s a lot you can do. Start with your minimal viable product (aka your single use case AI agent) and slowly layer in the use cases that expand its problem-solving. You’ll know it’s time to add new use cases when you’ve mastered the one you’re currently on. In fact, you’ll find the cost of ownership remains quite sustainable if you’ve built processes and integrated tools that are growing according to your needs.

As you scale, you’ll experience savings costs when it comes to human support hours. Trilogy reduced their human support hours by 60% after 12 weeks, freeing support staff to focus their efforts more efficiently. In the long run, scaling sustainably allows your support needs to grow as you do while saving your team the one resource they can’t get back—time.

People always ask me this question. And the answer is an unsatisfactory one—it depends. In the case of Trilogy, a large-scale enterprise that streamlines operations and support for hundreds of clients, they use a variety of tools ranging in cost, including:

Large companies with AI support across channels can spend over $100,000 on LLMs, tokens, and associated AI costs. Similarly, it can cost up to the same per year on Amazon Web Services. And that doesn’t include the cost of engineering a sophisticated support system that automatically generates and cross-checks AI responses across 90 agents.

When you’re talking about tooling, people, and time, it’s hard to make estimates about how much you should spend on AI agents unless we talk through the minute details of your circumstances. (Shameless plug for my colleague Peter Isaacs, who would be stoked to talk through your AI automation journey in painstaking detail.)

My advice is to talk to your technology and tooling vendors, ask colleagues in your field, and do a lot of research. We’ve also included a RAG cost estimation template for you to forecast costs for your next project.

The number of LLMs available has exploded in the last year. The influx of choices brings questions about which ones you should be using based on your use cases. There are five things you can do right now to understand models and choose the right ones.

Choosing the right LLMs requires thoughtful intention. Use RAG to provide context, making whichever LLM you choose more efficient and cost-effective. Different model versions work better for different tasks, so don’t be afraid to use older versions and cross-check responses to ensure quality and accuracy. You should be balancing the costs of prompt engineering against your models to help you achieve the best performance within your budget.

A graphics processing unit (or GPU) has made the modern world of AI possible. Compared to CPUs, GPUs have many smaller processing cores designed to work in parallel. As a result, LLMs and other Gen AI models use GPUs to perform massive mathematical and operational tasks quickly and simultaneously. Today, enterprise, consumer-grade GPUs serve multiple uses, from model building and low-level testing to deep learning operations, like biometric recognition.

We won’t go into all of the GPUs out there, because there are a bunch. But they are typically divided into three categories useful for enterprise:

The question is, do you need one? GPUs are expensive resources. For many, using proprietary models and a serverless approach gets them far enough in their AI journey to solve for most use cases. But for the folks interested in AI innovation and playing with bigger, faster, complex AI projects, a GPU has been a critical asset, leading to some supply challenges.

Choosing the right hardware for a use case is essential. It’s overkill to build a cluster of H100 GPUs to run a seven billion model inference. It takes a lot of engineering hours to host a model, optimize inference, batch queries, and put up guardrails to make it run efficiently. Instead of investing in a GPU—and spending months installing and deploying models-—my advice is to leave it to platforms until use cases and costs are better defined. When you’re building a large-scale AI operation, hiring a team to run innovation makes sense. But for most use cases, avoid the complexity and use CPUs and smaller models more often. Bigger isn’t always better.

The conversation around AI seems to center around avoiding risk and not getting left behind. It’s a pretty negative approach to an exciting and novel technology, and that affects how we evaluate the value of AI and budget for it. But a budget represents more than just money, it represents time, effort, and strategic thinking. Instead of thinking about all the ways things can go wrong, invite your teams (and even your leaders) to budget for:

It’s not too late to invest in the time, evaluation, and experimentation you need to succeed with AI. The most important problems aren’t the easiest ones to solve, but an organization that is forward-thinking about AI will see ROI faster than a reactive one.

Remember, you don't need to be Tony Stark to achieve results with AI. By starting small and scaling up, carefully budgeting for tools and technology, and prioritizing continuous learning and experimentation, you can make the most of your budget, no matter the size.