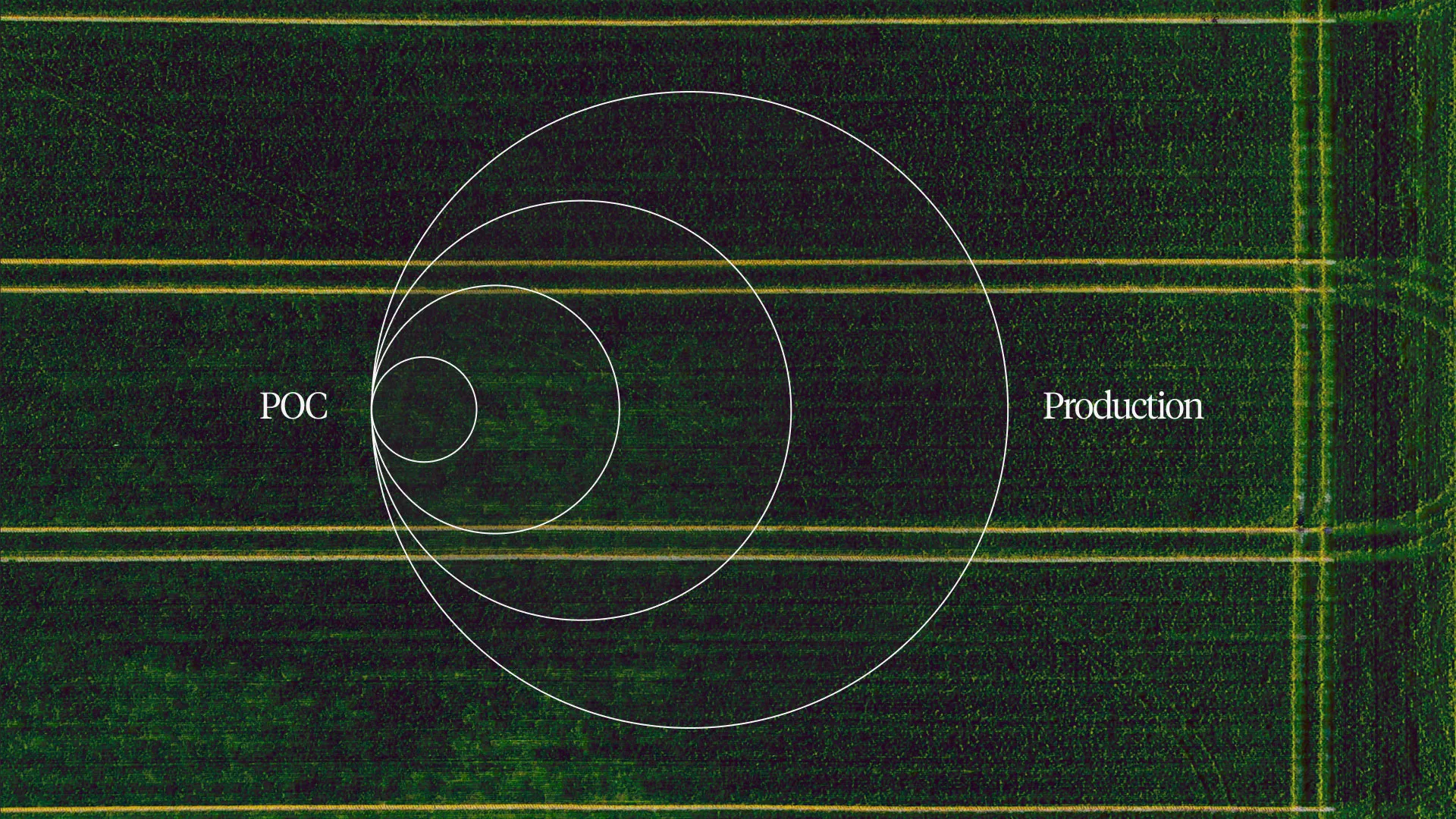

There's no shortage of AI agent POCs. Every company seems to have one. And according to MIT, most of them never ship beyond an internal team of testers.

The reasons vary. Sometimes the project loses executive sponsorship. Sometimes the team gets pulled onto other priorities. Sometimes the pilot just... stalls, with no clear path forward.

Some people blame the capabilities of AI itself, but after watching hundreds of teams go through this process, patterns emerge. The teams that ship do specific things differently. And the teams that stay stuck in proof-of-concept purgatory tend to make the same mistakes.

Here's what actually separates them.

This sounds obvious, doesn’t it? It isn't.

I've seen companies come in wanting to build an agent because AI is exciting. They want to innovate. They want to be seen as forward-thinking. But when you ask "what problem are you solving?" the answer is vague.

One example: a financial company wanted to build a financial advice agent. Sounds interesting. But they couldn't tie it to business impact. There wasn't a clear problem they were solving, and frankly, it was risky territory. The project got canned.

Here's the thing: they did have real problems. Their support volume was high. Customers were waiting. Agents were handling repetitive queries. That's a solvable problem with measurable impact.

But they skipped the obvious problem and went straight to the "innovative" one. That's a trap.

Teams that ship start with a clear problem: "We want to automate L1 support questions" or "We want to reduce wait times for account inquiries." Something concrete. Something measurable. Something the business actually cares about.

The teams that ship fastest often don't launch where you'd expect.

Turo didn't deploy their AI agent to replace their main customer support chatbot. They launched it on their "How It Works" page first, focused on new user education. Lower stakes. Easier to validate accuracy. "Instead of shipping the first chatbot to replace our existing solution, we shipped it somewhere else in the product," their team explained. "This allowed us to confirm we could answer questions accurately, that we could escalate when needed, and gain confidence in our approach."

StubHub International did something similar. They deployed their MVP only in the "My Account" section to control volume and ensure smooth adoption. English only. Core intents and FAQ handling. Once that foundation was solid, they expanded.

This is the "crawl, walk, run" approach. It sounds slower, but it's actually faster. You prove value quickly in a contained environment, build confidence internally, and earn the right to expand. Teams that try to boil the ocean on day one usually stall.

But before you write a single prompt, you should know what value means for your team.

Start by defining the high-level metrics the business cares about: containment rate, resolution time, customer satisfaction, escalation rate, cost per interaction. These are your north stars, and they will guide you from a small idea to a big return.

Teams that stall often build first and figure out measurement later. By then, it's hard to prove impact, which makes it hard to get resources, which makes it hard to ship, which makes it impossible to scale.
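To make those north stars concrete, here is a minimal sketch of how they could be computed from conversation logs. The `Conversation` fields and the metric definitions (for example, treating "contained" as resolved without a human handoff) are assumptions for illustration, not a standard; your own definitions should come from whatever the business already reports on.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class Conversation:
    # Hypothetical log record; field names are assumptions for this sketch.
    resolved: bool              # the customer's issue was resolved
    escalated: bool             # the conversation was handed to a human
    duration_seconds: float     # first message to close
    cost_usd: float             # assumed all-in cost of the interaction
    csat: Optional[int] = None  # 1-5 survey score, if the customer answered


def _mean_or_nan(values) -> float:
    """Average of the values, or NaN when there is nothing to average."""
    values = list(values)
    return mean(values) if values else float("nan")


def summarize(conversations: list[Conversation]) -> dict[str, float]:
    """Compute the headline metrics for a batch of conversations."""
    if not conversations:
        raise ValueError("need at least one conversation")
    total = len(conversations)
    # Assumed definition: contained = resolved with no human handoff.
    contained = sum(c.resolved and not c.escalated for c in conversations)
    escalated = sum(c.escalated for c in conversations)
    return {
        "containment_rate": contained / total,
        "escalation_rate": escalated / total,
        "avg_resolution_seconds": _mean_or_nan(
            c.duration_seconds for c in conversations if c.resolved
        ),
        "cost_per_interaction": sum(c.cost_usd for c in conversations) / total,
        "avg_csat": _mean_or_nan(c.csat for c in conversations if c.csat is not None),
    }


sample = [
    Conversation(resolved=True, escalated=False, duration_seconds=120, cost_usd=0.10, csat=5),
    Conversation(resolved=True, escalated=False, duration_seconds=60, cost_usd=0.10),
    Conversation(resolved=True, escalated=True, duration_seconds=600, cost_usd=4.00, csat=3),
    Conversation(resolved=False, escalated=False, duration_seconds=30, cost_usd=0.10),
]
for name, value in summarize(sample).items():
    print(f"{name}: {value:.3f}")
```

The point of writing this down before building is that the same function can run on your pre-launch baseline and on every release afterward, so the comparison is already in place when someone asks for proof of impact.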

There's a balance between testing enough and testing forever.

I see teams get stuck in endless internal QA cycles. They're trying to catch every edge case before launch. They're polishing prompts that haven't seen real users yet. They're optimizing for scenarios that might never happen.

Meanwhile, they're not learning from real conversations. And real conversations are the only thing that actually tells you what to fix.

That doesn't mean you ship blind. Turo tested against a suite of 40-50 must-handle questions and validated accuracy, relevance, clarity, and completeness before each release. They went through seven iterations in two months. But they did it fast, and they got to real users quickly.
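A release check along those lines might look like the sketch below. The four criteria come from the Turo example above; everything else (the 1-5 scoring scale, the `min_score` threshold, and the `GradedAnswer` structure) is a hypothetical setup, and the scores themselves would come from whoever reviews the answers, whether that is a person or an automated grader.

```python
from dataclasses import dataclass

CRITERIA = ("accuracy", "relevance", "clarity", "completeness")


@dataclass
class GradedAnswer:
    # Hypothetical record: one must-handle question plus reviewer scores.
    question: str
    scores: dict[str, int]  # criterion -> score on an assumed 1-5 scale


def failing_cases(graded: list[GradedAnswer], min_score: int = 4) -> dict[str, list[str]]:
    """Map each failing question to the criteria that fell below min_score."""
    failures: dict[str, list[str]] = {}
    for item in graded:
        # A missing score counts as a failure rather than a silent pass.
        low = [c for c in CRITERIA if item.scores.get(c, 0) < min_score]
        if low:
            failures[item.question] = low
    return failures


def release_ready(graded: list[GradedAnswer], min_score: int = 4) -> bool:
    """True only when every must-handle question clears every criterion."""
    return not failing_cases(graded, min_score)


suite = [
    GradedAnswer(
        "How do I change my booking?",
        {"accuracy": 5, "relevance": 5, "clarity": 4, "completeness": 4},
    ),
    GradedAnswer(
        "What is the cancellation policy?",
        {"accuracy": 5, "relevance": 4, "clarity": 3, "completeness": 4},
    ),
]
print(failing_cases(suite))
print(release_ready(suite))
```

Keeping the suite small and fixed is what makes fast iteration possible: each new version is graded against the same questions, so a regression shows up as a specific question and a specific criterion rather than a vague sense that things got worse.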

Sanlam Studios built and threw away four or five agents before landing on something that worked. That's not failure. That's the speed you need to learn.

Ship earlier than feels comfortable. Test the POC against your north star metrics and be honest about what’s working and what isn’t. Then get it in front of real customers; you'll learn more in a week of real traffic than in a month of internal testing.

The teams that ship aren't just conversation designers or just engineers. They're cross-functional.

But that doesn't mean you need a huge team. One of our customers runs their agent program with a PM and an engineer. That's it. They handle tens of thousands of conversations a week. But the agent is their baby. It's their remit to improve it constantly. They review transcripts. They tweak prompts. They own it.

What matters isn't headcount. It's having people who cover the key bases:

These might be three people or one person wearing multiple hats. The point is that when these functions work together from the start, you move faster. When they're siloed, or when no one owns the experience at all, you get handoffs, delays, and misalignment.

This is the most underrated thing that separates successful deployments from failed ones.

Teams that ship treat their agent like a product. They leverage the capabilities of a cross-functional team and invest in making the product shine because they own it. They review transcripts. They run evaluations. They make prompt improvements. They build new integrations and maintain existing ones with Engineering. The agent evolves as the business evolves.

Teams that fail treat it like a project. They build the thing, launch it, and move on. No one's reviewing conversations. No one's improving prompts. Knowledge gets stale. Performance degrades. Then the complaints start rolling in from customers and other parts of the business. By the time someone notices, the agent has been annoying customers for months and the team is playing catch-up.

It's like asking an employee to never learn a new skill while the company around them evolves and changes. Eventually, they're useless and it ends up costing more money to replace them.

If you're not prepared to staff a team that owns the agent experience on an ongoing basis, you're not prepared to ship.

And there’s nothing worse than a team that’s ready to ship only to get blocked by compliance in the final hour. If you're in a regulated industry, you know the challenges. Don't wait until the end to involve legal and compliance. Get them in the room from the start.

Sanlam Studios, building an AI financial coach inside a 106-year-old financial institution, made this a priority. "From the first meeting, I told them exactly what our vision was, how we'd do it, and how they could partner with us in the process," their COO explained.

Their approach: set the benchmark for success at the same level as a human advisor. Human advisors make mistakes. AI makes mistakes. The people who build agents make mistakes. Hold everyone to the same standard, but not perfection.

This framing matters. If you position AI as needing to be flawless, you'll never ship. If you position it as needing to be as good as (or better than) the current alternative, you have a defensible path forward.

Teams that bring Compliance in late often get blocked at the finish line. Teams that bring them in early make them partners in the process. And the teams that truly win treat all of their stakeholders the way they treat Compliance: as partners brought in from the start.

So, the teams that ship have identified a real problem, focused on a small use case they can solve, tested quickly, built a cross-functional team that owns the experience, and gotten buy-in from the necessary stakeholders. Now what?

Teams that win ship products so they can learn, not just to check a box. With the foundations laid, it’s time to discover where the value actually lies, and it’s usually with their customers. The best teams create a circular learning loop:

This sounds simple, but most teams don't do it. They build based on assumptions, not data. They don't have systems in place to learn from conversations at scale.

Tools like conversation evaluations can help you see patterns across thousands of interactions. Where are customers getting stuck? What questions are you not handling well? What should you automate next?
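As a rough illustration of what "seeing patterns" can mean, the sketch below ranks topics by how often conversations about them go unresolved. It assumes each conversation has already been tagged with an intent and a resolved flag; the `(intent, resolved)` record shape and the `min_volume` cutoff are assumptions for the example, not a description of any particular tool.

```python
from collections import defaultdict


def weakest_intents(
    records: list[tuple[str, bool]], min_volume: int = 2
) -> list[tuple[str, float, int]]:
    """Rank intents by unresolved rate, ignoring intents with too little traffic.

    Each record is (intent, resolved). Returns (intent, unresolved_rate, volume)
    tuples, worst first; ties go to the intent with more traffic.
    """
    totals: dict[str, int] = defaultdict(int)
    unresolved: dict[str, int] = defaultdict(int)
    for intent, resolved in records:
        totals[intent] += 1
        if not resolved:
            unresolved[intent] += 1
    ranked = [
        (intent, unresolved[intent] / count, count)
        for intent, count in totals.items()
        if count >= min_volume  # skip intents too rare to judge
    ]
    return sorted(ranked, key=lambda row: (-row[1], -row[2]))


records = [
    ("refund", False), ("refund", False), ("refund", True),
    ("login", True), ("login", True), ("login", False), ("login", True),
    ("shipping", False),
]
for intent, rate, volume in weakest_intents(records):
    print(f"{intent}: {rate:.0%} unresolved across {volume} conversations")
```

The top of that list is a reasonable candidate for what to fix or automate next, which is the question the learning loop is supposed to answer.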

That learning loop is what gets an idea out of POC purgatory and into a product that starts delivering real value.

If your POC is stuck, ask yourself:

The teams that ship aren't necessarily smarter or better resourced. They're more focused. They start with real problems. They measure what matters. They build teams that own the experience. And they learn from real traffic instead of theorizing.

That's it. It's not magic. It's discipline.

We help teams ship excellent AI customer experiences. Let’s work together to get your product out of POC purgatory.