
![GPT-5 Is Here: What You Need To Know [2026]](https://cdn.prod.website-files.com/6995bfb8e3e1359ecf9c33a8/6995bfb8e3e1359ecf9c4e52_66676b72d8be00360eeef33d_AI%2520Basics.webp)

OpenAI unveiled GPT-5 on August 7, 2025, presenting it as the long-awaited successor to GPT-4.

The launch was not flawless. A slide packed with confusing benchmarks quickly drew criticism and even became a meme before Sam Altman acknowledged it as a ‘mega chart screw up.’

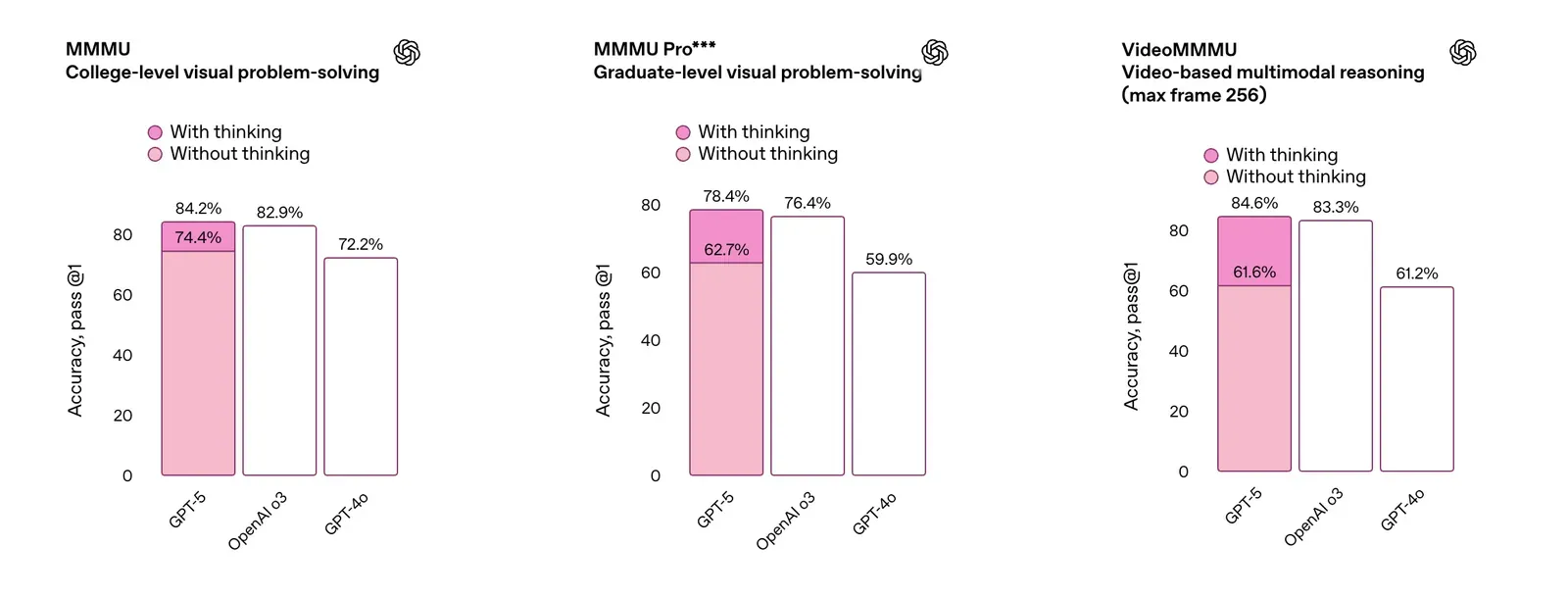

The misstep, however, did not overshadow the larger story. OpenAI positions GPT-5 as its first model to merge speed and intelligence into a single system. Earlier releases such as GPT-4o, o1, and o3 delivered steady progress but often forced users to choose between quick answers and deeper reasoning. GPT-5 aims to remove that trade-off by adapting on the fly, offering rapid responses to simple queries and more deliberate reasoning for complex ones.

Despite this, GPT-5’s arrival has been met with a mix of excitement and criticism from developers and users alike. This guide provides a detailed breakdown of GPT-5, including its core capabilities, performance, and the nuanced community reception.

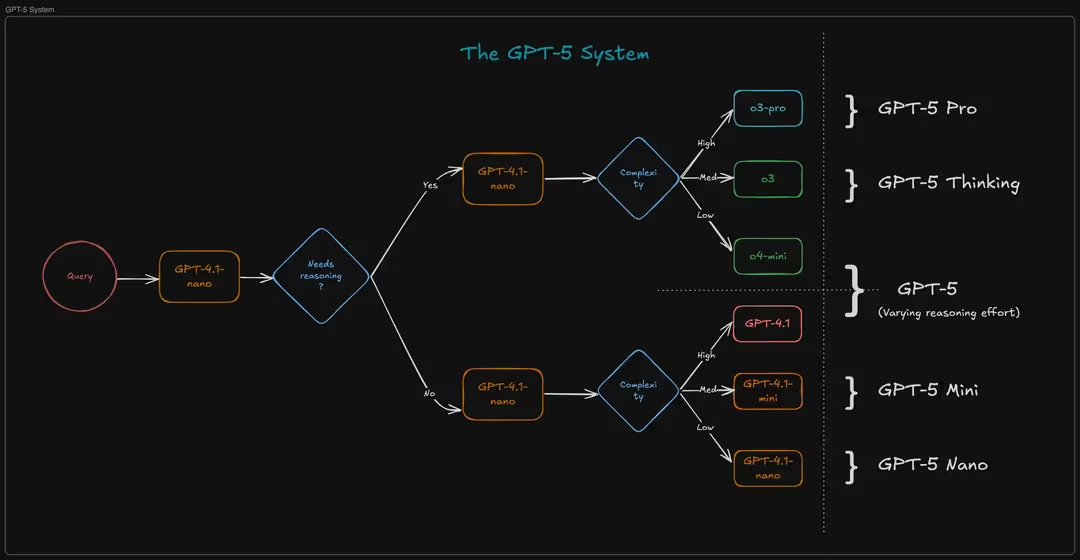

GPT-5 is not a single model but a system of models coordinated by a real-time “router”. The router automatically selects the best model for a given task, trading off speed against depth of reasoning. The system includes a fast default model for routine queries and a more powerful "Thinking" or "Pro" variant for complex, multi-step problems.

GPT-5’s multi-model routing system dynamically allocates compute power, ensuring that a simple question doesn't waste resources while a complex query receives the necessary depth of analysis. The system is composed of several variants:

Some of GPT-5’s advancements include:

GPT-4.5 was a short-lived but important model in OpenAI's history. Codenamed "Orion," it was an intermediate model released on February 27, 2025, and subsequently retired with the launch of GPT-5. The core difference between the two lies in their fundamental approach to intelligence:

While GPT-4.5 garnered praise for its conversational style and reduced hallucination rates compared to GPT-4o, its performance on complex reasoning benchmarks was significantly lower than that of GPT-5.


Access to GPT-5 is tiered based on user type and subscription plan. For developers, it is available via the OpenAI API in three distinct models: gpt-5, gpt-5-mini, and gpt-5-nano, each optimized for different price and performance requirements. The costs are based on token usage.

For ChatGPT users, the cost is bundled into the monthly fee. The Plus subscription remains at $20 per month and includes access to GPT-5. The most powerful variant, labeled "GPT-5 Pro" in the user interface, is rumored to be available on a separate, more expensive tier for select users.

The context window is a critical feature, and GPT-5's implementation has been a major point of discussion within the community. The API offers the largest context window, with a maximum of 400,000 tokens per request (272,000 input + 128,000 output). In the ChatGPT interface, the context window depends on the user's subscription: Free Tier users get 8,000 tokens, Plus users get 32,000 tokens, and Pro and Enterprise users have a 128,000-token window.
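To make the limits above concrete, here is a minimal sketch of a pre-flight check that compares token counts against the figures quoted in this article. The function and constant names are hypothetical, and the numbers are a snapshot taken from the paragraph above rather than values read from any OpenAI API.

```python
# Limits as quoted in this article; treat them as a snapshot, not a guarantee.
API_MAX_INPUT_TOKENS = 272_000
API_MAX_OUTPUT_TOKENS = 128_000

# ChatGPT context windows per subscription tier, as quoted above.
CHATGPT_CONTEXT_TOKENS = {
    "free": 8_000,
    "plus": 32_000,
    "pro": 128_000,
    "enterprise": 128_000,
}


def fits_api_request(input_tokens: int, max_output_tokens: int) -> bool:
    """Return True if both sides of an API request stay within the quoted caps."""
    return (
        0 <= input_tokens <= API_MAX_INPUT_TOKENS
        and 0 <= max_output_tokens <= API_MAX_OUTPUT_TOKENS
    )


def fits_chatgpt_tier(tier: str, conversation_tokens: int) -> bool:
    """Return True if a conversation fits the quoted window for a ChatGPT tier."""
    return conversation_tokens <= CHATGPT_CONTEXT_TOKENS[tier.lower()]
```

For example, a 40,000-token conversation would exceed the Plus window quoted above but fit comfortably in the Pro window, so `fits_chatgpt_tier("plus", 40_000)` is false while `fits_chatgpt_tier("pro", 40_000)` is true.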

Beyond its core features, GPT-5's capabilities have been tested and verified across a range of applications:

The release of GPT-5 has generated a wide range of opinions, with users, developers, and journalists all weighing in on its strengths and weaknesses. The consensus is far from unified, with reactions often dependent on a user's specific needs and workflow.

For those in technical fields, the improvements are undeniable. As reported by Wired, GPT-5 is "a flagship language model," with performance gains that show up in everyday use. The CEO of Cursor, a coding platform, declared on a live stream that GPT-5 is the smartest model his team has ever seen. Its ability to generate full-stack applications and debug complex codebases has been a significant win for developers.

In addition, the new unified system has been praised for simplifying the user experience. Instead of manually choosing between different model variants, users let the system route queries dynamically. CNET found that while the improvements were "subtle," GPT-5's outputs were "a little sharper, a little more polished and a little more helpful." This is particularly noticeable in data analysis and visual tasks, where GPT-5's presentations are more organized and insightful.

For many users, particularly those who rely on the model for creative writing or casual conversation, the change in tone was a major source of frustration. The new model, with its emphasis on safety and accuracy, was widely described on Reddit as "robotic" and less spontaneous. As reported by the Economic Times, OpenAI had to quickly update GPT-5's personality to make it "warmer and friendlier" after users complained of its "cold, corporate tone."

Tech critic Ed Zitron was particularly harsh, criticizing the removal of model choices and the new, seemingly arbitrary rate limits for "thinking" messages, and suggesting that "meaningful functionality… is being completely removed for ChatGPT Plus and Team subscribers." Similarly, despite OpenAI's claims, some critics, including PCMag's Ruben Circelli, argue that GPT-5 is an "insignificant update." He wrote that while it has some upgrades, it "doesn't solve the problems that actually matter" and that he has not "noticed a significant improvement" in areas like hallucination reduction.


The data suggests that GPT-5 is a significant improvement on complex reasoning, problem-solving, and coding, but some users have reported that it feels less creative or “human” than earlier models. The "right" model depends on your use case.

GPT-5’s new architecture is designed to "think" longer for complex queries, which can lead to increased latency. In live contact center interactions, speed is non-negotiable. The solution is to use a system that intelligently routes simple questions to a faster, lower-cost model and only engages the deeper GPT-5 reasoning for hard problems.
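The routing idea described above can be sketched in a few lines. This is an illustration only: the keyword hints, the word-count threshold, and the choice of `gpt-5-mini` as the "fast" tier and `gpt-5` as the "deep" tier are all assumptions, and a production router would more likely use a classifier or intent model than string matching.

```python
from dataclasses import dataclass

# Model names appear in this article; assigning them these roles is an assumption.
FAST_MODEL = "gpt-5-mini"
DEEP_MODEL = "gpt-5"

# Hypothetical phrases suggesting a query needs multi-step reasoning.
REASONING_HINTS = ("why", "compare", "troubleshoot", "step by step")


@dataclass(frozen=True)
class Route:
    model: str
    reason: str


def route_query(query: str, max_fast_words: int = 25) -> Route:
    """Pick a model tier from simple surface features of the query."""
    text = query.lower()
    for hint in REASONING_HINTS:
        if hint in text:
            return Route(DEEP_MODEL, f"matched hint: {hint}")
    if len(text.split()) > max_fast_words:
        return Route(DEEP_MODEL, "long query")
    return Route(FAST_MODEL, "short, routine query")
```

With this sketch, a question like "What are your opening hours?" stays on the fast tier, while "Why was I charged twice?" is escalated to the deeper model. The returned `reason` field is there so routing decisions can be logged and tuned later.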

This is a common pain point. While GPT-5 has an expanded context window, user-level issues can still occur. A well-designed agent with a clear workflow and guardrails can enforce context and instructions, ensuring the model stays on track.

This is a widely reported frustration. While internal tests show a significant reduction in hallucination rates, real-world performance can vary. Building a robust agent includes implementing a retrieval-augmented generation (RAG) system that grounds the AI’s responses in your company’s verified knowledge base, minimizing the risk of incorrect or fabricated answers.
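As a rough illustration of the RAG pattern mentioned above, the sketch below retrieves passages from a small in-memory knowledge base and builds a prompt that instructs the model to answer only from them. It uses plain word overlap for scoring, which is an assumption made to keep the example self-contained; real systems typically use embeddings and a vector store. All function names here are hypothetical.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: List[str], top_k: int = 2) -> List[str]:
    """Return up to top_k documents that share the most words with the question."""
    q_words = tokenize(question)
    scored = [(len(q_words & tokenize(doc)), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]  # drop non-matching docs
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(question: str, documents: List[str], top_k: int = 2) -> str:
    """Build a prompt that restricts the model to the retrieved passages."""
    passages = retrieve(question, documents, top_k)
    if passages:
        context = "\n".join(f"[{i}] {p}" for i, p in enumerate(passages, 1))
    else:
        context = "(no relevant passages found)"
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

The resulting string would be sent to the model as the user or system message. The explicit "say you do not know" instruction is the part that does the grounding work: it gives the model a sanctioned alternative to inventing an answer when retrieval comes back empty.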

You’re not stuck with a single vendor. GPT-5 is strong at structured reasoning and tool use, but some users find it too “cold” in tone, too verbose, or less creative than earlier models. If that’s the pain point, there are real alternatives:

Absolutely. Contact centers are where GPT-5’s mix of speed and reasoning really shines. The obvious win is summarization. After a long back-and-forth with a customer, GPT-5 can produce a clean, accurate recap that’s ready for CRM notes or escalation teams. The model’s improved honesty also means fewer “hallucinated” details compared to older versions, which matters when you’re logging compliance-sensitive information.

A quick tip: For a seamless GPT-5 experience in your contact center, consider a platform like Voiceflow. It allows you to integrate GPT-5 with your existing systems and provides the robust tools needed to build, test, and deploy AI agents at scale, all without needing to write a single line of code.

That’s a common frustration. GPT-5 gives you much higher context and message limits, which is great for long conversations, but it also means a single “fix this one thing” request can chew through more tokens than you’d like. The trick is to be specific and incremental. Instead of sending the whole transcript back every time, frame your follow-up as “based on the last answer, change X to Y” so the model only has to reprocess the delta.

Another tactic is to set rules up front. For example, tell GPT-5: “Always keep responses concise, and only rewrite the section I point out.” This way, the model self-limits rather than giving you a fresh essay each time. And if you’re building on top of GPT-5 for production use, you can wrap it in a prompt-management layer that automatically trims context, summarizes history, or re-anchors the conversation so you’re not paying to resend tokens the model has already seen. In short, lean on structure and guardrails to get the efficiency benefits of the bigger window without feeling like you’re burning prompts on small tweaks.
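A prompt-management layer of the kind described above can start very small. The sketch below keeps the system message (the "rules up front") and then the most recent turns that fit a token budget. The message shape (`role` and `content` keys) follows the common chat format, the function names are hypothetical, and the four-characters-per-token estimate is a crude assumption standing in for a real tokenizer.

```python
from typing import Dict, List

Message = Dict[str, str]


def estimate_tokens(text: str) -> int:
    """Crude heuristic (about 4 characters per token); not a real tokenizer."""
    return max(1, len(text) // 4)


def trim_history(messages: List[Message], budget_tokens: int) -> List[Message]:
    """Keep a leading system message plus the newest turns that fit the budget."""
    if not messages:
        return []
    # Preserve the up-front rules if the conversation starts with them.
    head = [messages[0]] if messages[0]["role"] == "system" else []
    tail = messages[len(head):]
    used = sum(estimate_tokens(m["content"]) for m in head)

    kept: List[Message] = []
    for msg in reversed(tail):  # walk from newest to oldest
        cost = estimate_tokens(msg["content"])
        if used + cost > budget_tokens:
            break  # stop at the first turn that no longer fits
        kept.append(msg)
        used += cost
    kept.reverse()  # restore chronological order
    return head + kept
```

Dropping old turns outright is the simplest policy. Replacing them with a short summary, as the paragraph above suggests, preserves more context at the cost of an extra model call.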